Performance and Cost Analysis of an Embedded Accelerator Platform for CNN Workloads

Abstract

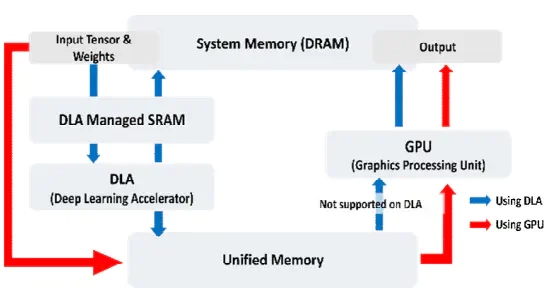

Modern artificial intelligence (AI) applications run on a wide range of systems, and researchers have proposed hardware and software optimizations to process them efficiently. However, optimizations designed for general-purpose computing systems are difficult to apply directly to embedded systems with limited resources. This study compares general-purpose accelerators (GPUs) and AI-specialized accelerators (DLAs) on embedded platforms to provide criteria for selecting the appropriate processor for convolutional neural network (CNN) models. Our experimental results show that DLAs achieve up to 4.5 times higher power efficiency than GPUs. On the other hand, DLAs perform worse than GPUs in terms of throughput, latency, and energy efficiency. Overall, this study shows that CNN models can be optimized for different target embedded processors according to the constraints of the embedded system environment.