Audio Compression Accelerator Design for Improving the Response Time of AI Speakers

Abstract

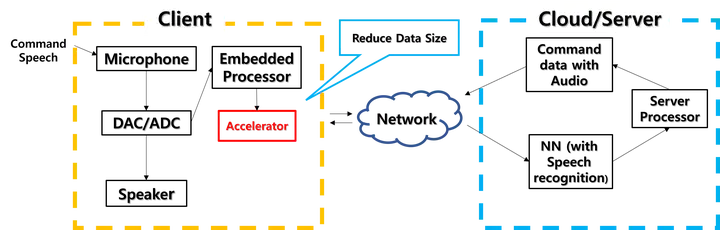

Response time is one of the critical performance factors of artificial intelligence (AI) speakers. Network delay over the Internet and processing time on the cloud server infrastructure dominate the response delay of an AI speaker. The network delay is proportional to the size of the packets that carry the recorded query. This recorded audio is normally sent uncompressed, because compression can be a heavy burden for the wimpy processors embedded in AI speakers. In this work, we design an audio compression accelerator that reduces the packet size of user queries. We implement the proposed accelerator on an FPGA-based SoC development board. Our evaluation shows that the audio compression accelerator effectively reduces the overall response time of an AI speaker.